What is entropy? Part 1: A simple definition

– by Paul Spoorenberg, 25/06/20

Ever wondered why heat never flows from cold to hot on its own? Why we cannot seem to build a machine that is 100% efficient, and why we remember the past but not the future? It all has to do with a concept called entropy.

In this blog series we give an easy-to-grasp definition of entropy and the second law of thermodynamics. The latter is one of the all-time great laws of science because it tells us why anything happens at all, from the cooling in a refrigerator to the formation of a thought.

Part one: a simple definition

Part two: birth of the Second Law

Part three: the way the universe will end

The most mysterious of all concepts

Temperature, pressure and energy are familiar concepts. If someone tells you about the pressure and temperature of a cooling machine, it immediately rings a bell.

But what if someone asks you about the change in entropy of a system? Well… that's rather abstract.

What is entropy? With this simple question we are moving into something far more fundamental, even philosophical. This is where the same thermodynamic rules we use to calculate the efficiency of a cooling machine also describe the ultimate fate of the universe. And it isn't pretty.

The analogy of a library

Long story short, entropy says something about the quality of energy. The first law of thermodynamics tells us that the total amount of energy in the universe is constant: it can never be depleted, nor can it grow.

For example, the energy stored in a log of wood is not lost when you ignite it. It is simply converted into heat.

You can call energy the currency of physics.

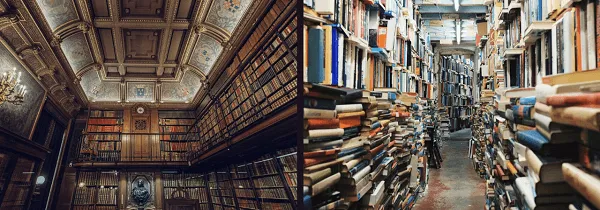

But one amount of energy is not the same as another. Think of two libraries that contain the same number of books but differ in quality. In one library, all books are neatly arranged alphabetically on the shelves. In the other, they lie in a random pile. Although the two libraries hold the same number of books, they differ in the quality of service they can provide.

Entropy, loosely, is a measure of the quality of energy, in the sense that the lower the entropy, the higher the quality. Energy stored in a carefully ordered way (the efficient library) has lower entropy. Energy stored in a chaotic way (the random-pile library) has higher entropy.
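
To make the library analogy a little more concrete, here is a toy count. It assumes every book is distinguishable and simply counts the ways n books can stand on a shelf: there are n! orderings, and only one of them is alphabetical. The function names are hypothetical, and this is a combinatorial illustration of the analogy, not a thermodynamic calculation.

```python
import math
import random

def arrangements(n_books: int) -> int:
    """Number of distinct orderings of n distinguishable books (n!)."""
    return math.factorial(n_books)

def chance_shuffle_is_sorted(n_books: int) -> float:
    """Probability that one uniformly random shuffle lands in alphabetical order."""
    return 1 / arrangements(n_books)

def simulate(n_books: int, trials: int, seed: int = 0) -> int:
    """Shuffle a small hypothetical 'library' many times; count how often it ends up sorted."""
    rng = random.Random(seed)
    books = list(range(n_books))
    sorted_hits = 0
    for _ in range(trials):
        rng.shuffle(books)
        if books == sorted(books):
            sorted_hits += 1
    return sorted_hits

for n in (5, 10, 20):
    print(f"{n} books: {arrangements(n):,} orderings, "
          f"P(sorted) = {chance_shuffle_is_sorted(n):.2e}")
```

With only 20 books there are already about 2.4 × 10^18 possible orderings, so a randomly stacked pile practically never sorts itself. Ordered arrangements are rare, disordered ones are overwhelmingly common.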

You can’t stop it from increasing

That's a cool analogy, you may think, but what's in it for me? Why is it important to know that energy comes in different qualities? Well, this has everything to do with efficiency. We all want the most efficient cooling machine, the most economical car, and the most efficient way of extracting energy from the resources provided by the earth and sun.

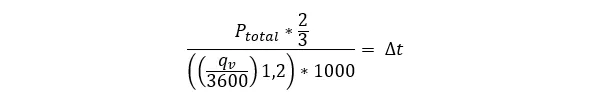

Wherever energy is converted, some of it almost certainly ends up as heat. In fact, the change in entropy depends on both the amount of heat transferred and the temperature at which that transfer takes place.

To make things clearer, here is an equation (this'll be the only equation, I promise).
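
The usual textbook form of this relation is Clausius's definition: when a body absorbs an amount of heat Q (reversibly) at absolute temperature T, measured in kelvin, its entropy changes by ΔS. Applying it to heat Q that leaves a hot body at temperature T_h and enters a cold body at T_c (both assumed large enough that their temperatures stay constant) shows why heat only flows from hot to cold on its own:

$$
\Delta S = \frac{Q}{T}
$$

$$
\Delta S_{\text{total}} = -\frac{Q}{T_h} + \frac{Q}{T_c} = Q\left(\frac{1}{T_c} - \frac{1}{T_h}\right) > 0 \quad \text{when } T_h > T_c
$$

Reversing the flow would flip the sign and make the total entropy decrease.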

If you've made it this far, you have been generating heat while reading all these sentences, and that heat flows to your surroundings. You are increasing entropy.
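
As a rough sketch of how much entropy that is, we can apply ΔS = Q / T to the room around you. The numbers are assumptions chosen for illustration, not measurements: a resting person gives off roughly 100 W of heat, the room is at 20 °C (293.15 K) and treated as a constant-temperature reservoir, and reading this post takes about five minutes.

```python
def entropy_to_surroundings(heat_joules: float, room_temp_kelvin: float) -> float:
    """Entropy gained by the room, dS = Q / T, in J/K (room at constant temperature)."""
    if room_temp_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return heat_joules / room_temp_kelvin

# Assumed values, for illustration only
body_heat_watts = 100.0   # approximate resting heat output of a person
reading_time_s = 5 * 60   # about five minutes of reading
room_temp_k = 293.15      # 20 degrees Celsius

heat = body_heat_watts * reading_time_s  # 30,000 J released into the room
delta_s = entropy_to_surroundings(heat, room_temp_k)
print(f"Heat released: {heat:.0f} J, entropy added to the room: {delta_s:.0f} J/K")
```

Under these assumptions, you hand the room a little over 100 J/K of entropy just by sitting and reading.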

Can we also decrease entropy? Of course, by storing energy in a more ordered way, for example by freezing water into ice cubes. But to do so, the freezer uses energy and releases heat into its surroundings. The entropy created in the process is always greater than the entropy removed from the water.
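
Here is a minimal bookkeeping sketch of the ice-cube example, again using ΔS = Q / T. It makes several simplifying assumptions: 100 g of water already at 0 °C (273.15 K), a latent heat of fusion of about 334 J/g, a kitchen at 20 °C (293.15 K), and a freezer treated as pulling heat straight from the water. The coefficient of performance (COP, heat removed per joule of electrical work) of 3 is a hypothetical value, and all names are illustrative.

```python
from dataclasses import dataclass

LATENT_HEAT_FUSION_J_PER_G = 334.0  # approximate latent heat of fusion of water

@dataclass
class FreezerResult:
    ds_water: float  # entropy change of the freezing water (J/K), negative
    ds_room: float   # entropy change of the kitchen receiving the rejected heat (J/K)

    @property
    def ds_total(self) -> float:
        return self.ds_water + self.ds_room

def freeze_water(mass_g: float, cop: float,
                 t_cold_k: float = 273.15, t_room_k: float = 293.15) -> FreezerResult:
    """Entropy bookkeeping for freezing water that is already at 0 degrees Celsius."""
    q_removed = mass_g * LATENT_HEAT_FUSION_J_PER_G  # heat pulled out of the water
    work = q_removed / cop                           # electrical work the freezer uses
    q_rejected = q_removed + work                    # first law: all of it ends up in the kitchen
    return FreezerResult(
        ds_water=-q_removed / t_cold_k,
        ds_room=q_rejected / t_room_k,
    )

def carnot_cop(t_cold_k: float, t_room_k: float) -> float:
    """Highest COP any refrigerator can reach between these two temperatures."""
    return t_cold_k / (t_room_k - t_cold_k)

realistic = freeze_water(mass_g=100, cop=3.0)
ideal = freeze_water(mass_g=100, cop=carnot_cop(273.15, 293.15))
print(f"Water: {realistic.ds_water:+.1f} J/K, room: {realistic.ds_room:+.1f} J/K, "
      f"total: {realistic.ds_total:+.1f} J/K")
print(f"Ideal (Carnot) freezer total: {ideal.ds_total:+.2e} J/K")
```

The water's entropy goes down, but the kitchen's goes up by more, so the total is positive. Only a perfect (Carnot) freezer brings the total down to zero, and plugging in a COP above `carnot_cop(...)` would make the total negative, which is exactly what the second law rules out.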

The total entropy of an isolated system, such as the universe as a whole, never decreases; it strives towards a maximum. In short, this is an abstract statement of the second law.

Here we glimpse the beast that is thermodynamics stirring under the surface. How can something keep increasing in abundance? Where does it come from? Who or what is pouring entropy into the universe, making spontaneous change possible?

In our next blog we will dive into the history of entropy and see how different scientists built on each other's theories to reach the final definition of the second law: the law that shows us exactly why a cloud of milk mixes into your coffee and why an ice cube never forms spontaneously in your drink.

Paul Spoorenberg | Sales Manager

Paul Spoorenberg has been working at H&H since 1994. During his career he has gained valuable expertise in HVAC solutions for all kinds of vessels in the Commercial, Offshore, Naval & Yacht segments.